Overview of This Article

- The scope of this article discusses the fundamentals of implementing and maintaining a Tulip table model that is: performant, reliable, composable, and scalable.

- The purpose of this article is to provide the following:

- Recommendation to use Tulip Library table assets as a starting point for typical use cases

- Best practices for design of additional tables

- Building performant table queries

- Planning and implementing a table record archive strategy

Audience: App builders, solution architects, data architects, data platform resources

Key concepts

- Tables are a foundational component of any Tulip solution and one of many data sources within your organization.

- A well-designed table model enables a solution that is performant, composable, and scalable.

- Tulip Library assets give customers a head start with a common data model.

- Tables have certain limitations — some by design (e.g., rate limiting) and some by practice (e.g., record count thresholds).

- Designing performant queries and archiving older Table Records in high-volume tables (e.g., > 10 million records) helps maintain a responsive solution, directly supporting operator productivity and user experience.

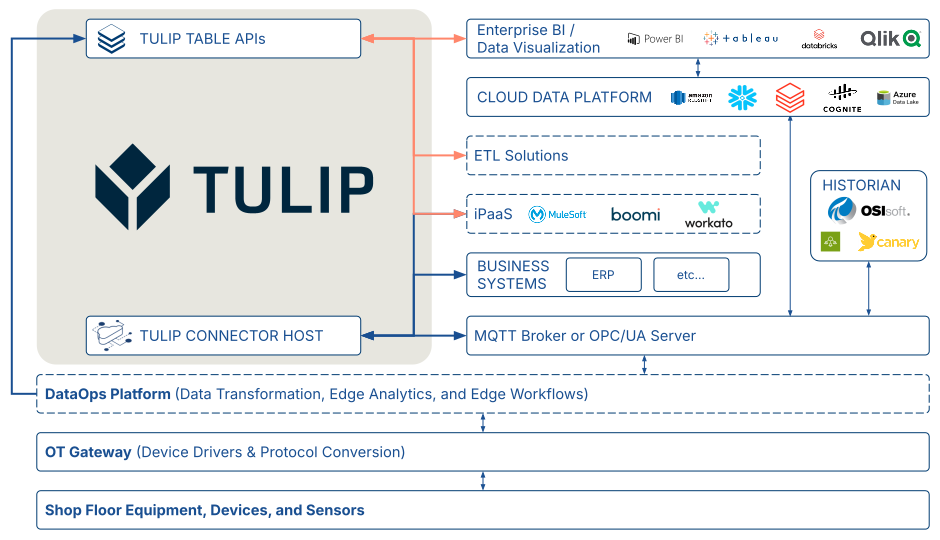

Typical Tulip position in enterprise data flow

As a frontline operations platform designed to serve as a single pane of glass, your Tulip solution hosts critical manufacturing data and integrates with other data sources across the enterprise. Consider the architecture diagram below. Your Tulip solution may interact with enterprise business systems via Connector Functions, and with shop floor equipment via an MQTT broker or OPC UA server configured as your Machine Data Source. Your iPaaS, ETL solution(s), and enterprise data visualization tools may interact with your Tulip solution via Table APIs.

Tulip resources for common data model

Tulip provides several resources to accelerate solution development with a common data model built around Tables. For more information, see:

Additional articles on common data model

- Common Data Model

- Example: Use the common data model for discrete use cases

- Example: Use the common data model for pharma use cases

Tulip Library resources on common data model

Table optimization for scalability

Best practices for scalable table design

The tables in the Tulip Library Common Data Model are built around the best practices described below. These tables — and the principles behind them — serve as a practical guide for designing tables that remain scalable and usable in Tulip logic over the long term.

Make tables horizontally-scalable (tall not wide).

Consider a Bill of Materials table. A new Tulip app builder might be inclined to create a "wide" table with a separate Number column for each component in an assembly. While this may seem straightforward in the short term, it quickly complicates query reuse, burdens trigger logic, and limits the table's applicability to other assemblies.

In the Bill of Materials (BOM) table below, columns represent the parent and component of an assembly. If an assembly has five components, the table contains five records. This structure supports an n-level hierarchy, since a subassembly can act as a "Parent" in one relationship and a "Component" in another.

Note also that this table design is both product-agnostic and industry-agnostic — it could store the BOM for anything from a meal to a commercial aircraft.

Recommended structure for a Bill-of-Materials table

| Label | Field Type | Description |

|---|---|---|

| ID | Text | Required: Unique identifier |

| Parent Material Definition ID | Text | Unique identifier of the parent assembly's material definition |

| Parent Material Description | Text | Description of the parent material |

| Component Material Definition ID | Text | Unique identifier of the component material definition |

| Component Material Description | Text | Description of the material to be assembled or consumed |

| Component Quantity | Number | Quantity of the material to assemble or consume |

| Component UoM | Text | Unit of measure for the component |

| Point of Use | Text | Location, operation, or step where the material will be assembled or consumed |

Avoid very wide tables

While the Tulip platform supports up to 200 columns, a table exceeding approximately 50 columns may be a signal that the underlying data model needs to be reconsidered for long-term scalability.

Avoid storing too many distinct "things" in a single table

In this context, a "thing" refers to a use-case-specific type of data entity. For example, an "Equipment & Assets" table might store context for both shop floor equipment and tools requiring calibration tracking. While it can be practical to store different types of entities in the same table, doing so is most appropriate when:

- Record ID usage is consistent across entity types.

- In the "shop floor equipment" and "calibrated tools" example, the record ID is commonly the company asset tag.

- The different entity types share approximately 50% or more of the same columns.

- If the different types of things populate very few of the same columns, it is likely a better fit to store them in separate tables.

- There is no one-to-many relationship between entity types.

- For example, a Batch may contain many Work Orders. Even if Work Orders and Batches share more than 50% of the same columns — and the ID column serves as either a Work Order ID or a Batch ID — the one-to-many relationship between them can complicate Query design and Trigger logic, resulting in a table model that is unintuitive for both solution builders and data consumers.

- Consensus among stakeholders.

- The solution developers and users of the data generally agree the different entity types are fundamentally similar (e.g. 'shop floor equipment' and 'calibrated tools').

Practical limitations of Tables

Rate limits

Tulip applies rate limits to assets to provide a consistent and reliable experience for users with respect to:

- Record writes

- Record deletes

Read more about table rate limits here.

Record count limits

The Tulip platform does not enforce a hard limit on the number of records in a Table; however, a practical limit exists depending on how the table is used. As record counts grow, it is common to see performance degradation in queries and aggregations, which can affect app reliability, operator experience, and shop floor productivity.

There are documented cases of Tulip customer production apps operating on tables exceeding 10 million records with negligible performance impact attributable to record count alone.

Table optimization for reliability and performance

Minimize use of Linked Records

In earlier versions of the Tulip platform, tables with multiple linked record columns and more than one million records exhibited significant performance issues. Platform improvements have substantially addressed this, but it remains a best practice to minimize or mitigate the use of linked records for several reasons:

- Performance at scale

- Extraction of table records via APIs or CSV download

- Known feature limitations (e.g., inability to filter an embedded interactive table based on a column from a linked table)

Building performant queries

Query performance depends on your filter type(s)

| Filter type | Performance at Scale | Notes |

|---|---|---|

| equals | Good to Excellent | Excellent for exact matching on Text columns. Use "equals" filters whenever possible over other filter types. |

| starts with | Good | |

| is in | Fair to Good | Best with a short list (< 10 items). Performance decreases as the list grows. |

| is null | Fair to Moderate | Queries relying solely on this filter can be slow if most records have null values in the column. |

| is not null | Fair to Moderate | Conversely, if most records are null, this query can return results very quickly. |

| ends with | Fair to Moderate | Case-insensitive. Performance degrades with large result limits and high record counts. |

| contains | Fair to Moderate | Case-insensitive. Performance degrades with large result limits and high record counts. |

| does not equal | Poor to Fair | |

| is not in | Poor to Fair | |

| does not start with | Poor to Fair | |

| does not end with | Poor to Fair | |

| does not contain | Poor to Fair |

Query performance depends on your count of filters

When designing queries, aim for the right balance: enough filters to return precise results while keeping the query reusable across similar use cases. It is uncommon to need more than three or four filters.

If you are building a query with many filters, it may indicate that your table model lacks scalability and would benefit from a partial redesign.

Always set an appropriate query limit

Set a LIMIT on every query, using the smallest value that satisfies your use case:

- Checking whether at least one matching record exists: 1 record

- Reporting: Consider using Analytics instead

- Other use cases: Consider the practical maximum number of records that would be retrieved or evaluated based on the query filters used, and use that number as the LIMIT (if less than 1,000).

Why this matters:

A query using the default limit of 1,000 will attempt to retrieve up to 1,000 matching records — even when only a handful are needed. Unnecessarily large limits consume time and resources in use cases where a smaller result set would suffice.

Table Record archiving strategy fundamentals

For the purposes of this discussion, "archiving" refers to the process of extracting records from Table(s), loading them into a cloud data platform via an ETL solution (e.g. an iPaaS or data integration platform), and then deleting those records from the Table(s).

Background and recommendation

- Tulip does not enforce a hard limit on the number of records that can reside in Tables.

- Tulip recommends keeping individual table record counts to a few million or fewer, as query performance can degrade as record counts grow.

- Performance issues are compounded by the use of linked records and poorly designed queries.

Recommendation:

Maintain Table record counts below 10 million by periodically moving older records to a cloud data platform.

Planning and implementing table record archiving

- Define the archiving criteria — determine the query filters that identify records eligible for archiving in each table.

- Example: For a "Station Status History" table, you might archive all records dated before the first of the previous month.

- Identify the destination — select your target cloud data platform (e.g., Redshift, Snowflake, Databricks, etc...).

- Select an ETL solution — choose a data integration platform, third-party iPaaS, or custom Python scripting to perform the record moves.

- Perform an initial bulk migration — move all records meeting the criteria defined in step 1 to the destination identified in step 2, in batches.

- Schedule recurring incremental migrations — set up an automated process to perform similar record moves on an ongoing basis. A common best practice is to perform such record moves on a very frequent basis, e.g. every 15 minutes for tables updated throughout a typical work day.

For more detailed guidance on 4 and 5, see ‘Best practices on archiving table records’ below.

Additional resources on ETL options:

- Data Integration Platforms

- Cloud Data Platforms

Best practices on archiving table records

Use the Sequence Number as a Cursor

When paginating through records, use the sequence number as your cursor rather than offset-based pagination.

Why offset pagination breaks: If records are inserted or updated while your sync is running, offset-based pagination can skip or duplicate records. Sequence number-based cursors are stable — sequenceNumber > last_seen_seq always gives you the correct next page regardless of concurrent writes.

Syncing a table happens in two phases: a one-time bulk load to load all historical records, followed by recurring incremental migrations to capture changes. Both should use the sequence number as a cursor.

Initial Bulk Load then Incremental Migrations

Before running incremental syncs, perform a one-time bulk load — a full load of all historical records. This gives your destination a complete baseline.

Pattern:

- Start with

last_seq = 0(or last saved checkpoint if resuming an interrupted bulk load) - Fetch pages sorted by sequence number ascending

- Write each page to the destination

- Save a checkpoint every ~500 records

- Stop when a page returns no records

Respect Rate Limits and Use Exponential Backoff

The Tulip Tables API enforces rate limits on all requests. There are two layers to handling this correctly:

Proactive throttling — space your requests to stay within the limit from the start, rather than waiting to be rejected

Reactive backoff — when a 429 is received despite throttling (e.g. due to other concurrent processes sharing the same workspace API quota), back off your GET frequency and retry.

See the Tulip API Rate Limits guide for the current limits. Do not hard-code limit values in your ETL solution — use a named constant so they are easy to update if limits change.

Exclude Linked Record Fields Using the Fields Filter

Tables support linked record fields (tableLink type) — fields in one table that reference records in another. When a tableLink field is included in a records response, the Tulip API has to resolve those references server-side, which creates cascading database queries. On tables with many linked fields or large record counts, this places significant load on the database and affects all users of the workspace.

The fix: use the fields query parameter to explicitly list the fields you want returned, and exclude tableLink fields entirely. This requires a small amount of upfront work — you need to fetch the table schema first to know which fields to include — but it is the correct approach and results in faster, lighter queries.

Checkpoint-Based Error Recovery

Network failures, timeouts, and unexpected errors will happen. Design your connector to recover gracefully without restarting from scratch.

Pattern:

- Persist the last successfully processed sequence number to a durable store after every ~500 records

- On restart, load the checkpoint and resume from seq > checkpoint_seq

- Write records using upsert logic (not inserts) in the destination so re-processing records from the checkpoint is safe

Did you find what you were looking for?

You can also head to community.tulip.co to post your question or see if others have solved a similar topic!